Journey Map

Posted by thomaslotze in Projects, Uncategorized on April 20th, 2015

In preparing for my talk on Journey at Adventure Design Group, I made a little animated map of where Journey to the End of the Night has been played.

Journey analysis, Part 4: More maps!

Posted by thomaslotze in Analytics on June 9th, 2014

This is the last in a series of analyses of the 2013 San Francisco Journey to the End of the Night registration data. It follows Part one, Part two, and Part three. Here we’re examining what else we might see from location information.

First, how do we find out where people live? Area code is not terribly reliable; in this age of cell phones, much of this comes down to “where did you live when you got your first cell phone” — we have mostly 415 and 510, yes — but a lot of diversity (not surprisingly). So we’ll assume that the address people put down is an accurate representation of where they live, and use zip code to map people. If we just look at number of people coming from each zip code, we get a map like this:

Now, that tells us where most people are coming from. But, like any good mapper, we want to consider population density. Is this just a population map of the Bay Area? To find out, let’s use census data to see whether people living in a particular area are more likely to register for Journey, using the same methodology we did in Part 3. We could do even better by using age-restricted census data (since we know what our approximate age range is). I leave that as an exercise to the reader.

So, if you recall, we came up with our analysis of the IFR for each zip code — of the people who registered for Journey, how likely are they to actually show up? And it looked like this:

Now, we consider the current question: from the overall population, how many register for Journey? We get a rather different look:

Yeah, a pretty low percentage. That’s about what we expect — as much as /we/ love Journey, the Bay Area is made up of tons and tons of people, and not all of them are into these weird events.
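
The per-capita rate here is just registrations divided by population for each zip code. As a rough sketch of that arithmetic, assuming a data frame with one row per zip code (the zip codes, column names, and counts below are made up for illustration, not taken from the Journey or census data):

```r
# Hypothetical per-zip counts; real values would come from the
# registration file and census population tables.
zips <- data.frame(
  zip           = c("00001", "00002", "00003"),
  registrations = c(120, 45, 3),
  population    = c(12000, 9000, 30000)
)

# Share of each zip code's population that registered.
zips$rate <- zips$registrations / zips$population

# The same rate expressed as "one out of every N residents".
zips$one_in <- round(1 / zips$rate)

# Highest-registering zip codes first.
zips[order(-zips$rate), ]
```

Reading the rate as "one in N" makes small percentages easier to judge: a rate of 1% means one registrant for every 100 residents.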

Now, are there any zip codes that stick out, in terms of how much of the population registers for Journey? It turns out there are!

Now, half a percent to two percent may not sound very impressive. But that means one out of every 50 to 200 people that live there registered for Journey! For any area, that’s pretty incredible. So, what /are/ some of those high-registering zipcodes?

I recognize some of those…let’s see it on a map.

Berkeley, Mission, SoMa: we love you too.

Finally, as a reward for making it all the way through this series, I’ve made an interactive map for you. If you want to see how the zip code you live in stacks up against the rest of the Bay Area (or the zip code your friend lives in), check it out here.

You can download the code and csv files needed for parts 3 and 4 here: journey_parts_3_and_4.zip.

And that’s all! Do you have any other ideas on useful analysis from Journey data? Thoughts on improving the analysis here? Let me know!

Journey analysis, Part 3: Can we predict who will flake?

Posted by thomaslotze in Analytics on May 23rd, 2014

This post follows (as you might expect) Part one and Part two of Journey analysis, in which we examine both /who/ registers for Journey and /when/ they register. Ultimately, though, we want to know not just how many people will *sign up* for an event, but also how many will actually *attend*. As it turns out, this proportion was much lower than we expected for Journey. About 53% of the registrants actually showed up. 47% flaked.

Note: we couldn’t match up all the waivers with registrations, so we’re missing about 6% of people who showed up. In this analysis I make the assumption that these are missing completely at random and don’t affect the results, but if you do the numbers yourself and see that it looks like less than 53% showed up, that’s why.

I know this is the Bay Area, with a pretty high reputation for flaking, and this /is/ a free event, so people may not feel as invested in actually attending, but I didn’t realize it was going to be that high. In DC, we’ve actually seen the opposite: /more/ people show up than pre-register. For this Journey, we cut off registration at 3400, to make sure that we would have enough materials for everyone who registered, and we ended up with a ton of extra, because of all the people who didn’t show. That’s the key lesson for next time: here, pre-registration rates are much higher than attendance rates.

But what we’d really like to know is: can we figure out what that rate is likely to be /beforehand/? Can we determine what factors indicate that someone is more likely to flake, so we can predict our actual flake rate for the event?

I really, *really* wanted to have a great finding for this. Something like figuring out that people who live in the Marina flake for Journey more often, or that people living in the Mission are more reliable. Sadly, a geographic predisposition to reliability or flakiness doesn’t seem to be borne out by the data. But I can at least use this as a good example for how to do rate comparisons.

Now, we could take the naive approach: just take, for the people who signed up from each zip code, what percent of them actually showed up? Let’s call this the inverse flake ratio, or IFR. If we plot that, it looks like this:

So, for example, 94012 (Burlingame): 0% IFR. 100% flaky. Or 94119 (SoMa): 100% IFR. 0% flaky. Really tells you something about those people, right?

Well. Not really. Because, for one thing, that’s a pretty minimal sample size: only 1 person signed up from each of those zip codes. And based just on that one person, we’re judging how flaky the entire zip code is. We can do better by estimating the uncertainty we have about the true rate — when we don’t have many people who’ve signed up, we’re a lot less confident about what the rate is for that zip code. The next step would be to go through modeling this as a binomial, and using an independent uninformative beta distribution as a prior for each zip code. But we can do even better, using a hierarchical Bayesian model.
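
Before moving to the hierarchical model, here is a minimal sketch of the independent-prior step, to show how little one registrant tells us. It assumes a uniform Beta(1, 1) prior, which is my choice for illustration; the post does not say which uninformative prior it had in mind. The function name `ifr_interval` is hypothetical. With `shows` attendees out of `n` registrants, the posterior is Beta(1 + shows, 1 + n - shows):

```r
# 95% posterior interval for a zip code's IFR under a Beta(1, 1) prior.
ifr_interval <- function(shows, n, prior_a = 1, prior_b = 1) {
  a <- prior_a + shows
  b <- prior_b + n - shows
  c(lower = qbeta(0.025, a, b),
    mean  = a / (a + b),
    upper = qbeta(0.975, a, b))
}

ifr_interval(shows = 0, n = 1)     # one registrant who flaked
ifr_interval(shows = 1, n = 1)     # one registrant who showed up
ifr_interval(shows = 53, n = 100)  # a well-sampled zip code
```

With a single registrant the interval covers most of the range between 0 and 1, so the "0% IFR" and "100% IFR" zip codes are not really distinguishable from each other.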

Basically what we’re saying is that there’s some distribution of IFR among different zip codes. And while we don’t know what that distribution /is/, we’re going to assume it’s approximately a Beta Distribution. Roughly, most zip codes are going to have a similar rate, with more extreme rates being less likely. It’s a way of modeling the intuition that the IFR isn’t likely to vary widely from zip code to zip code, but instead is going to vary around a more common value. As it turns out, based on this data, our distribution on zip codes’ IFR is going to look like this:

What this indicates is that there’s a relatively broad range of possible IFR across zip codes. It’s not exactly uniform — it’s more likely to have an IFR close to 0.5 than either 0 or 1 — but it is fairly broad. We don’t have a strong expectation that all IFRs are going to come from a narrow range.
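
To get a feel for how a shared distribution pulls small-sample zip codes toward the common rate, here is a rough sketch. It is not the hierarchical Bayesian fit used in the post: it swaps in a much cruder empirical Bayes shortcut, fitting the shared Beta by method of moments, and the counts are invented.

```r
# Hypothetical counts per zip code (not the Journey data).
n     <- c(1, 1, 40, 120, 300)   # registrants
shows <- c(0, 1, 18, 66, 150)    # registrants who attended

# Fit a shared Beta(a, b) by method of moments on the raw rates of
# zip codes with enough registrants to have a meaningful rate.
raw <- (shows / n)[n >= 20]
m <- mean(raw)
v <- var(raw)
k <- m * (1 - m) / v - 1          # implied prior "sample size"
a <- m * k
b <- (1 - m) * k

# Each zip code's estimate is pulled toward the shared mean, more
# strongly when it has few registrants.
shrunk <- (a + shows) / (a + b + n)
round(cbind(n, raw_rate = shows / n, shrunk), 3)
```

In this toy data the two single-registrant zip codes move from 0 and 1 to roughly 0.5, while the zip code with 300 registrants barely moves. The made-up numbers give a much narrower shared distribution than the broad one described above, so the amount of shrinkage here should not be read as a result.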

So then for each individual zip code, we’re going to end up with a distribution over the possible IFRs. And we can compare that distribution to see how likely it is that people from one zip code are more or less reliable than the Bay Area in general. Geographically, our less naive map looks like this:

But it’s still a single number per zip code, when really we should have a range of uncertainty around each one. So, for a more informative (though less geographic) view, we can plot our 95% interval on the IFR for each zip code (a credible interval, since it comes from the posterior), with the x-axis being the number of registrations from that zip code. Each vertical line is the range of values in which we expect that zip code’s “true” IFR to lie. The red lines are upper and lower bounds on what we expect the Bay Area’s mean IFR to be.

That’s a little disappointing: the variances on the IFR estimates are such that we don’t see a significant flakiness change based on zip codes. All of those vertical lines cross the red lines of the Bay Area’s overall IFR. That means we can’t really make any disparaging /or/ complimentary remarks about any particular area. Perhaps that’s for the best.

We also see that the Bay Area’s overall IFR is likely below 50%, which means that, at least after signing up for Journey, fewer than 50% of registrants are expected to actually show up. That’s pretty important to remember when planning free events here. I’m very curious to try repeating this in other cities and see the comparison.

Still, let’s press on a little further. Can we try to model this somewhat differently, using some other covariates to try to predict flakiness? Maybe if we use the distance of the zip code from the Journey start, we’d see some predictive pattern?

We can do a quick check on this by using a GLM (generalized linear model) to predict whether a registrant will show up, based on other factors we know about them. Let’s go back to our original dataset and fit a logistic regression model to see if any of the following are significant factors in whether a registered person will actually show up for Journey:

    glm(formula = attended ~ signup_timestamp + distance + age +
        is_facebook + is_google + is_twitter, family = "binomial",
        data = registrations)

    Coefficients:
                       Estimate Std. Error z value Pr(>|z|)
    (Intercept)      -1.274e+02  5.178e+01  -2.460   0.0139 *
    signup_timestamp  9.243e-08  3.744e-08   2.469   0.0136 *
    distance         -4.400e-04  2.260e-03  -0.195   0.8456
    age              -5.107e-03  5.714e-03  -0.894   0.3714
    is_facebookTRUE  -4.590e-01  4.438e-01  -1.034   0.3010
    is_googleTRUE    -3.575e-01  4.436e-01  -0.806   0.4203
    is_twitterTRUE   -7.112e-01  4.590e-01  -1.550   0.1213

It doesn’t look like distance is actually a significant factor…or how old they are…or what signup method was used. (Although I must note that this model is only really looking for linear relationships to log-odds, and there are other relationships which could be found. While I’m not going any deeper into it now, I’d certainly be curious to hear any other investigations, and may come back to try some other machine learning techniques in the future.) However, there /does/ seem to be a slight effect where those signing up near the event are more likely to attend. We can look into that a little more using a moving average:
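
Both steps, the logistic regression and the moving average, can be tried without the registration file. The sketch below runs on simulated data in which I have assumed that later signups attend more often and that distance has no effect. The column `days_before_event` is a stand-in I introduced for the signup timestamp, so its coefficient has the opposite sign to `signup_timestamp` in the output above.

```r
set.seed(1)

# Simulated registrations in signup order (illustration only).
n <- 2000
days_before_event <- sort(runif(n, 0, 40), decreasing = TRUE)
distance <- rexp(n, rate = 1 / 10)

# Assumed truth: later signups attend more often; distance has no effect.
p_attend <- plogis(0.5 - 0.03 * days_before_event)
attended <- rbinom(n, size = 1, prob = p_attend)
registrations <- data.frame(attended, days_before_event, distance)

# Logistic regression, the same model family as the fit above.
fit <- glm(attended ~ days_before_event + distance,
           family = "binomial", data = registrations)
summary(fit)$coefficients

# Centered moving average of attendance over 201 consecutive signups.
# The first and last 100 positions have no full window and are NA.
width <- 201
moving_avg <- as.numeric(stats::filter(registrations$attended,
                                       rep(1 / width, width), sides = 2))
plot(registrations$days_before_event, moving_avg, type = "l",
     xlim = c(40, 0), xlab = "Days before event",
     ylab = "Share attending (moving average)")
```

The window width trades noise against detail: a wider window gives a smoother line but blurs any sharp change in attendance rate near the event.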

That’s noisy, but we can see a general upward trend. Using MARS we can generalize that into three sections: initial signups, mid-rate signups, and last-minute signups:

We can use that to get a better prediction of our actual attendance, given signups (similar to part 2). Again, to actually generalize this, we’d want data from a number of Journeys. Have I mentioned that I want more data? I always want more data.

For the next Journey, I’m currently leaning towards leaving registration open up to the time of the event, but also letting people know what our current projections are: for the number of people who will ultimately register and how many will actually attend. Just let people know that the materials are only for people with a signed waiver, and are first-come, first-serve. Having an overall idea of the range of signup-to-attendance rate is going to be pretty valuable in predicting attendance, especially if we’re considering hosting journeys of different sizes.

You can download the code and csv files needed here: journey_parts_3_and_4.zip.

Join us next time for the final section, where we look at how different zip codes sign up for and attend Journey!

Journey analysis, Part 2: Whens and How Manys

Posted by thomaslotze in Analytics on May 12th, 2014

In Part 1, I introduced Journey to the End of the Night and did some basic analysis of who signs up. Here, I’m going to look at the pattern of signups over time. Signups over time are interesting because they can potentially give us some insight into /why/ people signed up. They can also give us a way to estimate what our final registration numbers will be. Here’s the raw plot of cumulative registrations over time:

A few things to notice here. First, the jump in registrations on 10/10: that’s right after we sent out the announcement for Journey to the journey-sf mailing list. Second, we can see that registrations really take off on 11/3, six days before the event itself; presumably this indicates that many people wait until the week of the event to make their plans. (However, this might be equally explained by being listed on funcheapsf.com…unfortunately, I didn’t instrument for referral source. Bad analyst. Next time. Definitely next time.)

We can also see in the final signups a daily pattern — fairly consistent registrations throughout the day, flattening out during the night, when people are asleep. I’ll be honest, I’m not sure what action to take based on that (send email blasts before people go to bed?), but it’s interesting to see.

Predicting Total Registrations

Now, the real question: can we project our ultimate number of registrations, given the registrations we’ve seen so far?

Let’s assume there’s a point, somewhere close to the event, that starts an increase in the rate of registrations. I’ll call this the “what am I doing this weekend?” point. For this Journey, it looks like this happens about six days before the event.

Trying to fit an adaptive spline model (using the earth package) comes up with those splits we noticed before: an early section, a broad middle, and an increase towards the end. It also separates out the period two weeks before the event, where we do see an increase in registration rate.

Really, the most important thing this tells us (unfortunately) is that we get a huge proportion of our signups in the week before the event. And since we only have the one event, we don’t really know if that signup rate is predictable from the earlier rate. It gives us a rough theory to be able to predict an increased rate near the game, but I wouldn’t have much confidence in that ratio holding for future games. Clearly, the answer is to increase the sample size: let’s gather data from more games and see what general predictive behavior we can find. (So yes, this means that if you’re running a game, I am interested in running signups for you and getting your data.)

One hopeful note is that slight increase in registration rate two weeks before the event. So, *if* we were going to use this as a model for predicting registrations, we’d take the rates at each of those sections, assume (big assumption!) either an additive or multiplicative change two weeks and one week before the event, and plan out the registrations we’d expect to see. We’d know a lot more in the week or two before the event, but we’ve got to order ribbons before then. One nice thing is that maps can be printed very close to game day, so getting cheap ribbon and getting extra might work, then cutting down the map printing based on a closer estimate.
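
The projection described above is simple arithmetic once the rates are fixed. Here is a minimal sketch assuming the multiplicative version, with the one-week multiplier applied on top of the two-week one. The function name, the multipliers, and the counts are placeholders I made up, not values estimated from the Journey data.

```r
# Project final registrations from the current count and the baseline
# daily rate (the rate seen more than two weeks before the event),
# assuming the rate is multiplied by a fixed factor at 14 and 7 days out.
project_registrations <- function(current_total, base_rate, days_left,
                                  mult_two_weeks = 2, mult_one_week = 5) {
  days_early <- max(days_left - 14, 0)           # more than 14 days out
  days_mid   <- max(min(days_left, 14) - 7, 0)   # 14 to 7 days out
  days_late  <- min(days_left, 7)                # final week
  current_total +
    base_rate * days_early +
    base_rate * mult_two_weeks * days_mid +
    base_rate * mult_two_weeks * mult_one_week * days_late
}

project_registrations(current_total = 300, base_rate = 10, days_left = 30)
```

Most of the projected total comes from the final week, so the answer is dominated by `mult_one_week`, which is exactly the number a single event cannot pin down.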

We’d love to be able to do some Monte Carlo simulation of possible worlds, to get some idea of the variance in actual outcome — so that instead of just predicting a certain number of registrations, we could also give a prediction interval for the high and low possibilities. But that’s a pretty hard thing to figure out how to sample from (it’s not clear how much to oversample or undersample the registrations, and that assumption has a big impact). But if we were doing a running estimate of ultimate registrations using this segmented model, the result might look something like this, giving us our current estimate (in green) for the number of registrations as we get closer to the event:

That’s not too bad: the line is relatively flat, so it looks like we may be able to provide some reasonable predictions of our eventual registrations (though again with the caveat that we’re making a pretty strong and brittle assumption about how much the registrations increase two weeks before and one week before the event).

The End

You can download the cleaned registrations data and code for this post here. Join us next time for Part 3, where we ask, “Just how flaky is the Bay Area, really?”

Journey analysis, Part 1: Who signs up?

Posted by thomaslotze in Analytics on May 6th, 2014

Introduction

I’ve been involved in running an event called Journey to the End of the Night (or, in DC, “SurviveDC”) for the last several years. It’s a street game where you run through the streets seeking checkpoints and trying to avoid chasers. It’s a great game. It’s also completely free. I want to use analytics to understand better how people play the game, who plays the game, and how to run it better. I really like tracking game behavior and using it to understand and improve the experience; it’s probably time to think about the next iteration of Journey log for this purpose.

In this series, I’ll be looking at the registration data from the 2013 San Francisco Journey to the End of the Night. Because this game involves running your real-life body through the streets of a real-life city, with real-life dangers, we make everyone sign a waiver to indicate they understand those dangers. This year, we made everyone register and print those waivers beforehand.

As it happens, we can also use this registration data to understand some things about who plays Journey. We’ll explore what kind of things you might want to look for in registration data (not just for Journey, and not just for free street game experiences), the methods you can use to look for them, and how you might want to improve things based on what you find. For those who want to dig in a little deeper, I’ll go a bit into the statistical analysis, with some digressions into interesting data analysis and visualization along the way. I’ll also post the code I used, which could be used to perform the same analysis on other datasets.

Let’s get started.

Age distribution

Demographics, demographics, demographics…the median age for Journey registrants is 25, with 50% of registrants between 22 and 29, 95% between 17 and 40. And if you played Journey and are younger than 15 or older than 44 — you /are/ the one percent. The histogram can be seen here:
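
Summary numbers like these, and the histogram, come straight from `quantile` and `hist`. A small sketch on simulated ages (the gamma distribution below is only a stand-in so the code runs; it is not fitted to the real registrants):

```r
set.seed(42)

# Simulated ages standing in for the registration data.
ages <- round(rgamma(3000, shape = 9, rate = 0.75) + 14)

# Median, middle 50%, middle 95%, and middle 99% of registrants.
q <- quantile(ages, probs = c(0.005, 0.025, 0.25, 0.5, 0.75, 0.975, 0.995))
q

hist(ages, breaks = 30, main = "Age of registrants", xlab = "Age")
```

The 0.5% and 99.5% quantiles mark the cutoffs outside of which a registrant falls in the outer one percent.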

I don’t know much about marketing — but I do know that different channels are going to be better for different age ranges. There’s certainly a bias in the people who come out for Journey that’s affected by where the notifications have been historically; but I suspect the effect from people self-selecting is much stronger. So if you want to publicize your Journey, good places to do that will be those focusing on the 22-29 age bracket. Similarly, if we did make Journey into a commercial event, those would be the people who would be targeted.

Gender distribution

Now, we didn’t ask for gender explicitly on the signup form (because, honestly, we don’t care what you identify as). But I was still kind of curious how this breaks down. So I took the very interesting ‘gender’ package, which attempts to map names to gender likelihoods based on US birth records. I used this to map each person’s registration to a likely birth gender, based on name and birth year. Based on that analysis, Journey registration is about 40% female…which is not that far from the San Francisco overall ratio for this age range, reputedly (though I can’t find a source I think is reliable; the best I can do is Trulia or half sigma). A better comparison might be that Journey’s gender numbers are slightly worse than female participation in the Olympics — we’re at 40%, they’re at 44%.

I can hardly believe I’m making this into a plot, but:

We can also look at a breakdown by age and gender:

It looks like there’s a slight skew older for male names. We’re not going to worry too much about it, but if we wanted to check that, we could do a Kolmogorov-Smirnov test to see if the distributions were equal.
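
For anyone who does want to run that check, base R provides `ks.test`. A minimal sketch on simulated ages (the group sizes and means are invented):

```r
set.seed(7)

# Simulated ages for two groups (illustration only).
ages_male   <- rnorm(600, mean = 27, sd = 6)
ages_female <- rnorm(400, mean = 26, sd = 6)

# Two-sample Kolmogorov-Smirnov test: the null hypothesis is that both
# samples come from the same continuous distribution.
result <- ks.test(ages_male, ages_female)
result$statistic  # largest gap between the two empirical CDFs
result$p.value
```

The test assumes continuous data, so with ages recorded in whole years there will be ties and the p-value should be treated as approximate.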

So, gone are the days where you could figure out much about someone from their email domain. Of the people registering for Journey, almost 75% used a gmail.com address. Another 9% from yahoo.com, and 4% from hotmail.com. The next highest contender is berkeley.edu, with only 1.72%, and it just gets smaller from there. This was going to be a table, but everyone likes graphs, right?
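
The domain breakdown takes only a couple of lines. A sketch with made-up addresses:

```r
# Hypothetical addresses (not real registrants).
emails <- c("ana@gmail.com", "Bo@GMAIL.com", "cy@yahoo.com",
            "di@berkeley.edu", "ed@gmail.com")

# Keep everything after the last "@", lower-cased.
domains <- tolower(sub(".*@", "", emails))

# Percent of registrants per domain, most common first.
shares <- sort(table(domains), decreasing = TRUE) / length(domains) * 100
round(shares, 1)
```

Lower-casing first matters because the same domain typed with different capitalization would otherwise be counted separately.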

Takeaway? Make sure your Journey emails look good in gmail. If they don’t, don’t even bother. It’s worth looking at it in yahoo, too, to the tune of 10%. This was actually something we considered in the email confirmations: because we include an image of the QR code we’d later scan in for registration confirmation, we had to make sure it would print in gmail (for which I recommend the roadie gem).

Authentication

What authentication method are people using? OMG, it’s overwhelmingly Facebook (even though everyone has gmail.com addresses). When choosing between authenticating with facebook, google, twitter, or other OpenAuth, 57% chose facebook. 38% chose google, and 5% chose twitter. I was surprised by this one, especially because almost everyone had a gmail.com email address. But in terms of doing easy identity registration, facebook authentication and google authentication were great. I feel like we need to keep OpenAuth for the open/hacker ethos…but I don’t think twitter is worth it.

The End

You can download the cleaned registrations data and R code for part 1. Come back next time for part 2, where I take a look at the timeseries of our signup rates over time.

Bandit version 0.5.0 released

Posted by thomaslotze in Uncategorized on May 5th, 2014

I’ve uploaded a new version of my bandit package to CRAN, which does computation around Bayesian Bandit A/B testing. It now includes my first contribution from another author, an implementation of the “value remaining” in an experiment, thanks to Dr. Markus Loecher. I’ve also been considering automatically implementing a hierarchical model (which I now think is significantly better than assuming even an uninformative independent prior for the arms). I am hesitant to depend on JAGS (because of the trouble I’ve sometimes seen in installing it), but I might do just that in the next version…

Value-Driven Metrics: Finding Product-Market Fit

Posted by thomaslotze in Analytics on May 31st, 2012

Everyone loves product-market fit. Eric Ries writes and speaks about it in the lean startup. Paul Graham simply says to make something people want. But how do we find product-market fit? How do we find out what people actually want?

As a data analyst, I ask myself: how do we quantify this and measure it?

The answer, I’m finding, is the idea of value-driven metrics.

To explain: when first building a product, people try to figure out which, out of all the possible things that could be built, people will find valuable. They are trying to identify product features and functionality that users will find valuable enough to come back again and again. Initially, the startup has to have some sense of what users will find useful in the site. But startups usually have a number of useful features identified, and so they provide users a number of choices as to how to use a site.

For one example, in building an online game, users might be able to develop their resources; they could go on quests; or they could choose to interact with their friends. It would be really useful to know which of those things people actually care about: which of them will bring people back?

To shorten the discovery period, we’ve started to map all of the actions someone could take, and group them into different clusters based on the value proposition of the site. For Learnist, we consider four categories: browsing (finding things of interest to learn more about); supporting boards (content) by means of comments, likes, or shares; learning from a board; or teaching by creating or developing new boards.

As Learnist users visit our site or application, we log every time they do something that might be counted as one of those actions. More importantly, we then look at the times when users are actually returning to the site. For every return visit, we look at everything measured from that visit and see which activities they attempted. We evaluate all of the return visits, to determine what percent of those return visits actually contained one of these actions.
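
As a sketch of that calculation, assuming an event log with one row per logged action (the column names, session ids, and rows are hypothetical, not the Learnist schema):

```r
# Hypothetical event log: one row per logged action. This assumes
# every return session has at least one logged row.
events <- data.frame(
  session_id = c(1, 1, 2, 3, 3, 3, 4),
  is_return  = c(FALSE, FALSE, TRUE, TRUE, TRUE, TRUE, TRUE),
  value      = c("browse", "learn", "browse", "browse",
                 "support", "support", "teach")
)

returns <- events[events$is_return, ]
n_return_sessions <- length(unique(returns$session_id))

# Count each value at most once per return session, then divide by
# the number of return sessions.
per_session <- unique(returns[, c("session_id", "value")])
share <- table(per_session$value) / n_return_sessions
round(100 * share, 1)
```

The shares can add up to more than 100% because one return visit can include several kinds of action. Values that never occur in a return visit, like "learn" here, simply do not appear in the result.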

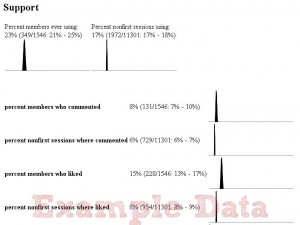

Essentially, what we’re evaluating is this: when people are coming back to the site, why are they coming back to the site? What do they find valuable? Putting this into a graphical display looks something like this (with example data):

At the top are two key metrics for user return rates overall: How many are returning the next day and ever. Then for each value we’re offering, we look at how many people are ever making use of the feature (availability) and also what percent of non-first-time (returning) sessions use that feature at least once.

The example table shows what the value is, a text summary, and an estimated distribution (for fellow statisticians, it’s just a simple beta-binomial posterior). From this high-level view, we can drill down a little bit and see, within a given Value, what specific actions people are taking when they return. It’s a good way to figure out what features people are actually using, or not using, so we can either focus on them, drop them, or try a new iteration on them.

With more example data, we can see that “support” includes commenting and liking (as well as additional actions as one scrolls down).

I see this as an actionable metric for the strategy level: It tells us what areas we should be focused on, once we’ve found something people are really responding to.

Of course, there’s definitely more refinement to be made here as we get beyond our early adopters: we should convert this over to cohort data, develop an estimate of where our baseline is, and see where the recent cohorts of users are as compared to that baseline. Very quickly, we’ll want to view these metrics based on where the users are coming from (the referring site) and demographics, so that we can understand what different types of users find valuable. We may also want to include a per-user factor; right now these metrics are biased towards whatever power users like, rather than what the larger group of returning users likes, and this may not always be what we’re looking for.

But I think this is a good place to start: if you want to know why your users are coming back, measure what they do when they come back.

(This post is also cross-posted on the Grockit blog.)

Journey Log

Posted by thomaslotze in Projects on June 27th, 2011

We just finished what I’m calling the “alpha run” of journey log, a system for logging runners running Journey to the End of the Night/SurviveDC-style games. There were a fair number of data issues, but I think it’s a really cool combination of mobile app (Android and iPhone versions) for recording and web app for storing and visualizing runner patterns during the night. You can see visualizations of the checkpoints, chasers, and runners (with individual runners getting their own pages). The web visualizations are done with a rails/mysql backend, using flot, jit, and Google maps javascript libraries.

SFEdu Startup Weekend/DemoLesson

Posted by thomaslotze in Projects on June 9th, 2011

In short: we won!

I took part in the first Education Startup Weekend this past weekend, a great event where you try to create a viable startup over the course of 54 hours. You can read the NPR story here or a great writeup from one of my teammates here. My team created DemoLesson.com, a career listing site for teachers where every teacher has a teaching video, so that school administrators doing the hiring can get real information much earlier in the process. You can see a splash page here. The concept is pretty straightforward, but there’s a real need to be able to see teachers teach before flying them out for an in-person interview and demo lesson. I liken it to coding interviews for developers: you want to be able to see the person doing what it is you’re actually hiring them for. There was a lot of positive feedback from Teach For America, the charter school administrators we talked to, and the teachers we interviewed. The weekend was also a competition, and out of 14 competing teams, the judges thought ours was the most viable. It was a great team, a great experience, and a lot of fun.

The Dr. Who Postcard Project

Posted by thomaslotze in Projects on May 23rd, 2010

I just gave a short talk at Noisebridge’s 5 Minutes of Fame on a Dr. Who Postcard project; the code is open sourced on github. See more details at the postcard project page!